How We Built Responsible AI in Mirror Journal

At a glance: The Mirror AI philosophy

- The bridge, not the destination: AI can help people organize and reflect on their thoughts; it is not a replacement for therapy.

- Intentional friction: We prioritize “off-ramps” over increased engagement. When a Mirror journaler is in crisis, the app guides them toward human support rather than more time in the app.

- Developmental safety: Generative ‘remixes,’ a common AI feature where creative outputs are reinterpreted to provide fresh perspectives, are disabled for all users under 18 to prevent the inappropriate validation or reinforcement of distress.

- Architectural privacy: Safety is engineered into the technology rather than added later as a policy or feature.

In developing Mirror, a digital journaling tool from the Child Mind Institute, we didn’t set out to build just another mental health app. We took it as a challenge to answer a deeper ethical question: When someone shares their most vulnerable thoughts with an algorithm, how can the technology best serve as a bridge to meaningful human connection?

Our development of digital tools to advance scientific discovery and innovation in youth mental health aims to change the way health care technology is built — responsibly and with clinical validation. In an industry defined by prioritizing engagement and “moving fast and breaking things,” we are focused on safety and human-centered design.

AI support is not a substitute for human connection

Technology should expand access to support, not create the illusion of it while leaving people isolated. AI can reflect language, but it cannot relate to or empathize with us.

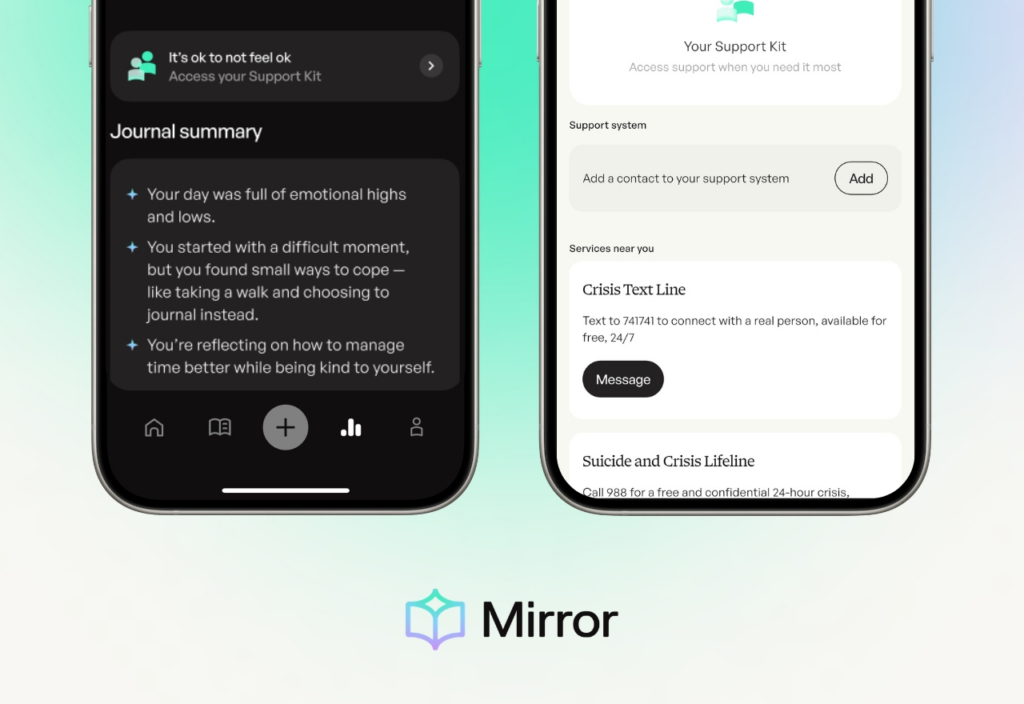

Mirror’s AI functions as a bridge from private journaling to human connection. Its role is to provide users with AI-generated reflections and summaries to help them organize their thoughts so they are better prepared for the next step — whether that’s a conversation with a therapist, a caregiver, or a peer.

Erring on the side of sensitivity

Mirror is not a diagnostic or treatment tool; its safeguards are designed to route users toward human support, not to assess them or intervene clinically. When designing crisis detection, we recognized that risk exists on a spectrum. We deliberately tuned our system for high sensitivity.

When a user expresses sadness or hopelessness, the entry is internally classified to ensure appropriate resources are offered.

Rather than a generic response, the system provides supportive language and direct links to clinical resources. Mirror does this without escalating, using calm, direct language that validates the person’s experience without inducing fear.
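To make "tuned for high sensitivity" concrete, here is a minimal sketch. It assumes a hypothetical classifier that outputs a risk score between 0 and 1. The tier names and threshold values are illustrative assumptions, not Mirror's actual settings. The idea is that a deliberately low threshold offers support to more entries, accepting some false alarms in exchange for fewer missed ones.

```python
# Hypothetical thresholds (assumptions for illustration only).
# Lowering SUPPORT_THRESHOLD raises sensitivity: more entries are
# offered resources, at the cost of more false alarms.
SUPPORT_THRESHOLD = 0.2
HIGH_RISK_THRESHOLD = 0.6


def risk_tier(score: float) -> str:
    """Map a classifier risk score in [0, 1] to an internal tier."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= HIGH_RISK_THRESHOLD:
        return "high_risk"
    if score >= SUPPORT_THRESHOLD:
        return "offer_support"
    return "standard"
```

Under these assumed values, an entry scored at 0.25 would already be offered resources, even though a stricter system might ignore it.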

The value of redirection: Choosing well-being over engagement

In the tech world, friction — when a user moves away from the interaction — is usually seen as a failure. Mirror takes the opposite approach. Friction is essential when it serves the user’s safety.

When high-risk entries are detected, the AI is explicitly constrained: it will not provide reflections, remixes, or summaries. Instead, Mirror provides an off-ramp. Because Mirror is a wellness space, not a clinical environment, a machine should never “make the call” or force a clinical intervention. We guide users rather than coerce them, providing one-click access to 988, the Crisis Text Line, user-provided trusted contacts, and other supports. We prioritize safety over time spent in the app and always include a simple message we created (“It’s ok to not feel ok. Access support.”) to guide the user toward care and the support kit.
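The off-ramp behavior described above can be sketched roughly as follows. This is an illustration under stated assumptions, not Mirror's implementation: `EntryResponse`, `respond_to_entry`, and the feature names are hypothetical, and the high-risk flag is assumed to come from an upstream detection step.

```python
from dataclasses import dataclass
from typing import List, Optional

# Message text quoted from the post; all other names are hypothetical.
OFF_RAMP_MESSAGE = "It’s ok to not feel ok. Access support."
AI_FEATURES = ("reflection", "remix", "summary")
CRISIS_RESOURCES = ("988", "Crisis Text Line")


@dataclass
class EntryResponse:
    ai_features: List[str]   # generative features offered for this entry
    message: Optional[str]   # off-ramp message, if any
    resources: List[str]     # one-click support options, if any


def respond_to_entry(high_risk: bool, trusted_contacts: List[str]) -> EntryResponse:
    """Withhold all generative output for high-risk entries and offer support."""
    if high_risk:
        return EntryResponse(
            ai_features=[],  # no reflections, remixes, or summaries
            message=OFF_RAMP_MESSAGE,
            resources=[*CRISIS_RESOURCES, *trusted_contacts],
        )
    return EntryResponse(ai_features=list(AI_FEATURES), message=None, resources=[])
```

In this sketch the high-risk branch only offers options. Nothing is triggered on the user's behalf, which mirrors the "guide, don't coerce" principle.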

Protecting the most vulnerable: The “no remix” rule

Journaling can help people view their experiences from a new angle. Mirror’s remix feature does this by using AI to reframe a journal entry in a different voice or format. For many users, this creative distance can make difficult emotions easier to examine and process.

But generative remixes carry a specific risk: the potential for AI to inappropriately validate or reinforce harmful thoughts or behaviors. We view this risk as especially acute for adolescents, who are still developing the frameworks needed to evaluate AI-generated content critically. For that reason, we have a firm ethical boundary: no remixes for anyone under 18.
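As a rough illustration of the "no remix" rule, the check below gates the feature on age. The post does not say how Mirror determines a user's age, so the birth-date approach and the function names here are assumptions.

```python
from datetime import date

REMIX_MINIMUM_AGE = 18  # boundary stated in the post


def age_on(birth_date: date, today: date) -> int:
    """Return completed years of age on the given day."""
    years = today.year - birth_date.year
    # Subtract one if this year's birthday has not happened yet.
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years


def remix_enabled(birth_date: date, today: date) -> bool:
    """Remixes stay disabled for anyone under 18."""
    return age_on(birth_date, today) >= REMIX_MINIMUM_AGE
```

Passing `today` explicitly, rather than calling `date.today()` inside the function, keeps the check easy to test around the birthday boundary.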

The technology behind the safeguards: Architectural privacy

Mirror’s safety is built into its architecture, prioritizing a “trust but verify” approach to AI:

- Open source and pre-trained: We utilize pre-trained, open-source models rather than training them on user data. This helps ensure users’ personal reflections are not used to “teach” the AI or refine our algorithms.

- Single-turn interactions: Mirror uses “single-turn” interactions only. The system does not engage in back-and-forth chat, preventing “AI drift” where a model’s logic can shift or become unpredictable over a long conversation.

- Transient data processing: To ensure a high level of privacy, user journal text is processed immediately and automatically deleted shortly after processing. It is not retained for training or secondary use.

- Redundancy: We use two independent AI tools, an LLM-driven system and a BERT-driven system, to verify high-risk alerts, so that detection does not depend on a single model.

- LLM-driven system: An AI application that uses a Large Language Model (LLM) as its central “brain” to understand, reason, plan, and act autonomously, rather than just generating text.

- BERT-driven system: An AI model that uses Bidirectional Encoder Representations from Transformers (BERT) to understand, interpret, and process human language with high contextual accuracy.
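The redundancy idea can be sketched as two independent detectors checking the same entry. The post does not say how the two results are combined. This sketch assumes an entry is treated as high-risk if either detector flags it, which is consistent with erring on the side of sensitivity. The two detector functions are toy keyword stand-ins for the LLM-driven and BERT-driven systems, not real models.

```python
from typing import Callable, Iterable

# A detector takes one journal entry and returns True if it flags risk.
Detector = Callable[[str], bool]


def llm_stand_in(entry_text: str) -> bool:
    """Toy placeholder for an LLM-driven detector (assumption)."""
    return "hopeless" in entry_text.lower()


def bert_stand_in(entry_text: str) -> bool:
    """Toy placeholder for a BERT-driven classifier (assumption)."""
    return "no way out" in entry_text.lower()


def is_high_risk(entry_text: str, detectors: Iterable[Detector]) -> bool:
    """Flag the entry if ANY detector flags it (assumed combination rule).

    Each call sees only the current entry, with no conversation history,
    in keeping with the single-turn design.
    """
    # Run every detector so one system never hides the other's result.
    results = [detector(entry_text) for detector in detectors]
    return any(results)
```

Swapping `any` for `all` would require both systems to agree before an alert is raised. That would reduce false alarms but risk missing entries that only one system catches.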

Looking ahead: Reliability without boundaries

Frontier models like Gemini, Claude, and ChatGPT rely on cloud processing, which introduces inherent challenges for both privacy and offline reliability in a sensitive space like mental health. To overcome this, we are currently developing Mirror using smaller, low-parameter, open-source models that will eventually be able to disconnect from the cloud. While we are not there yet, this strategic move toward on-device processing represents a massive leap for both privacy and safety. By focusing on models designed for efficiency and transparency, we are working toward a world where:

- Data never leaves a user’s device, providing the highest possible tier of privacy.

- Detection is not limited by Wi-Fi connectivity. Safety shouldn’t depend on a signal; Mirror is being built to eventually provide support resources even when users are offline.

Moving forward

Building Mirror has reinforced that responsible AI requires continual assessment, refinement, and improvement; it is an ongoing practice. The most advanced AI in mental health is the one that knows its limits.

We are committed to doing all we can to provide evidence-based tools that help people get the support they need — because when someone shares their deepest struggles, connection to another person should always be the next step.

If you or someone you know is struggling, resources like 988 and the Crisis Text Line are available 24/7. Mirror is designed to make reaching them easier, not to replace them.